Here we introduce ManyFaces, a recently formed big team science group for face perception and face recognition research. This symposium will outline the scope and aims of ManyFaces and highlight the work of two of its working groups: the stimulus meta-database group and the stimulus collection group.

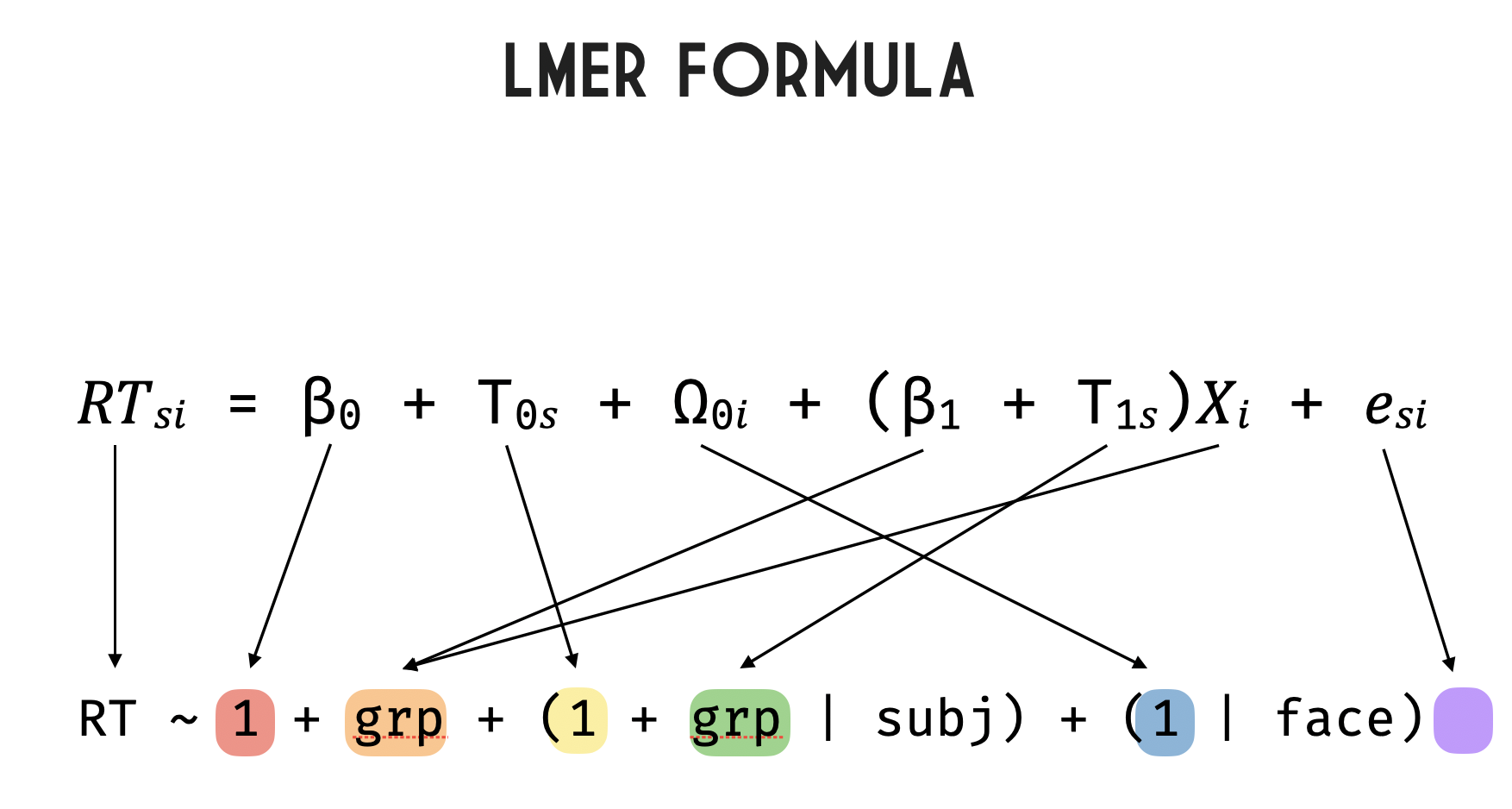

Broadly, the aim of ManyFaces is to improve, diversify, and crowdsource key aspects of face research, including perception and recognition. This involves, for example, collecting and using face stimuli; sharing existing stimulus sets; standardising stimulus collection procedures; and organising stimulus collection across multiple labs to obtain larger and more diverse face stimulus sets. ManyFaces also aims to crowdsource data collection across our members’ labs to test key research questions in face perception and recognition, enabling larger-scale designs, more diverse participant samples, and more generalisable findings. Finally, we aim to organise training workshops for key methods (e.g., morphing) and analyses (e.g., mixed effects models) used in face research.

The stimulus meta-database working group has compiled a guide to face stimulus meta-databases and resource lists. Various researchers have created lists or meta-databases documenting the broad variety of face stimulus sets that are available for research use. However, these lists vary in how comprehensive they are and in the type of information they provide about each stimulus set. Our guide therefore provides an overview of the most useful of these lists, noting key information such as the kinds of stimuli included in each list, the information provided about each stimulus set, the user-friendliness of the list, and the degree of overlap among lists. This guide should help researchers find the most appropriate stimuli for their research and is now publicly available on the Open Science Framework (https://osf.io/mbqt3/). This working group is also surveying ManyFaces members about any face stimulus sets they hold and are willing to share directly with other researchers, with the aim of compiling a guide to stimulus sets that cannot be found via existing lists and databases.

The stimulus collection working group aims to understand what kinds of stimuli face researchers need to address their research questions and how to acquire these stimuli in a standardised and reproducible manner. This will involve surveying members about their stimulus needs and the stimulus collection equipment they have, developing guides to stimulus collection (e.g., photography/video setup, ethics templates/consent forms), and eventually organising the collection, storage, and distribution of stimuli.

Altogether, ManyFaces is in its beginning stages but is taking steps toward more reproducible, generalisable, and inclusive face research practices.